The Swiss Bay blog

ZFS+TrueCrypt: best of both worlds

Posted on 10.1.17@1614 CEST

Table of contents

- Introduction

- Quick primer on ZFS

- TrueCrypt for ZFS

- Getting started

- Simulating data corruption

- Additional resources

- Final thoughts

Introduction

In this very first blog post, we will be looking at how we can combine integrity and security for data.

TrueCrypt is a nice piece of software in that it allows you to create encrypted containers and even whole drives to easily store files in them. However, it does not provide any guarantee that no data corruption will ever occur. Indeed, its sole purpose is to encrypt data.

On the other hand, ZFS is a logical volume manager (LVM) + filesystem (FS) bundled together that has remarkable features, namely copy-on-write (COW), easy pool management, caching, snapshots (also called "snaps"), RAID-like topology and much more but most importantly data integrity. When used properly, ZFS does a really good job at keeping your data away from silent corruption and other nightmares.

What if we could have both at the same time? Encryption and data integrity. Well, it turns out we can combine both programs and obtain just that. Let's get going.

Quick primer on ZFS

ZFS is an LVM because it handles so-called vdevs (virtual devices) to form pools and an FS because you can create one out of a pool.

Basically, you can have a file, a partition or a whole drive act as a vdev. You can then assign it a given role (caching, write log, data store, spare drive) and use one or more to form the structure of your pool. If you want something like RAID-1, you'd create a mirror of two vdevs. For RAID-0, you'd create a stripe. There are also many other configurations possible.
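Since a plain file can act as a vdev, the mirror and stripe layouts can be tried out without any spare disks. Here is a minimal sketch: the scratch directory /tmp/zfs-demo and the pool name demopool are made up for illustration, and the zpool commands are only printed, not executed, since creating a pool requires root and a working ZFS install.

```shell
# Hypothetical scratch directory for the backing files (override with VDEV_DIR).
VDEV_DIR="${VDEV_DIR:-/tmp/zfs-demo}"
mkdir -p "$VDEV_DIR"

# Two sparse 128 MiB files that can serve as file-backed vdevs.
truncate -s 128M "$VDEV_DIR/vdev1.img" "$VDEV_DIR/vdev2.img"

# Print the commands for both layouts instead of running them.
# File vdevs must be given as absolute paths.
echo "mirror: zpool create demopool mirror $VDEV_DIR/vdev1.img $VDEV_DIR/vdev2.img"
echo "stripe: zpool create demopool $VDEV_DIR/vdev1.img $VDEV_DIR/vdev2.img"
```

The only difference between the two layouts is the mirror keyword: without it, ZFS stripes data across the listed vdevs.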

Once you've got your pool set up, you probably want to create an FS on top of it (actually you create datasets, each being an FS, instead of just one single FS). Why? Because without it, you wouldn't be able to benefit from snaps, quotas and some other features. Those are really cool to have, especially snaps, as they allow you to easily revert a dataset to a previous state.

Now you've got yourself a robust data store with some very useful tools, but everything's still stored in plaintext.
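To give an idea of what reverting a dataset looks like, here is a small dry-run sketch of the snapshot workflow. The dataset name secretpool/data and the snapshot name before-cleanup are assumptions for illustration; the run wrapper is a hypothetical helper that only prints the commands unless DRY_RUN=0 is set.

```shell
# Hypothetical helper: print the command by default, execute it when DRY_RUN=0.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

DATASET="secretpool/data"        # assumed dataset name
SNAP="$DATASET@before-cleanup"   # assumed snapshot name

snapshot_demo() {
    run zfs snapshot "$SNAP"     # record the current state of the dataset
    run zfs list -t snapshot     # list the snapshots that exist
    run zfs rollback "$SNAP"     # revert the dataset to the recorded state
}

snapshot_demo
```

Note that a rollback discards everything written to the dataset after the snapshot was taken, so it is worth listing the snapshots first.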

TrueCrypt for ZFS

Normally, you format TrueCrypt volumes/drives with a given FS such as ext4, NTFS or FAT32. With ZFS though, it's best to use them as raw devices. In this case, TrueCrypt will just encrypt whatever binary data is written to the open (decrypted) volume. We will then use this to create one or more vdevs out of TrueCrypt to use with ZFS.

Getting started

Now that we know how ZFS and TrueCrypt are going to work together, we can start actually setting up the combo.

Prerequisites

ZFS is available on Solaris and its derivatives, on the BSDs and finally on Linux. TrueCrypt in its latest official release 7.1a supports Windows, Linux and OS X. Therefore, ZFS+TrueCrypt should only work on Linux and OS X/macOS systems. Note that in this blog post, we will only cover Linux. You can try other systems but it might involve additional steps for the setup.

First, install ZFS

sudo apt update && sudo apt install zfsutils-linux

Then TrueCrypt

wget https://download.truecrypt.ch/current/truecrypt-7.1a-linux-x64.tar.gz

tar zxf truecrypt-7.1a-linux-x64.tar.gz

chmod +x truecrypt-7.1a-setup-x64.sh && ./truecrypt-7.1a-setup-x64.sh

Creating and mounting a raw TrueCrypt volume

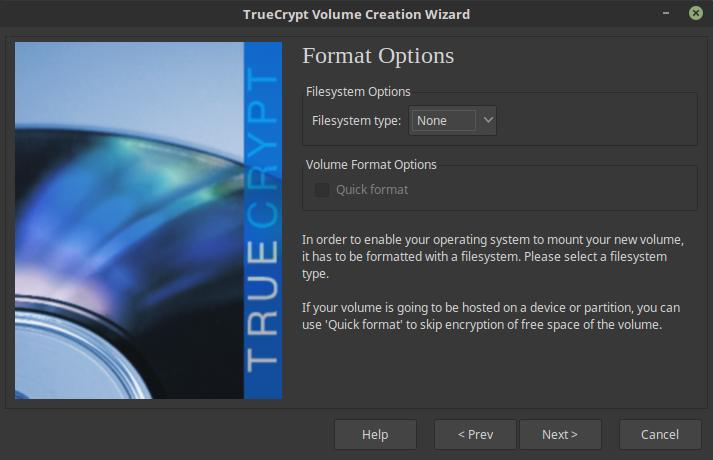

Follow the standard volume creation procedure, except that when asked about formatting you must choose "None":

Note that ZFS needs vdevs to be at least 64MB large, but it's best to use somewhat larger devices as otherwise you'll end up with a full pool very fast (which is bad in ZFS).
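The 64MB minimum can be checked before handing a volume to ZFS. The sketch below is only an illustration: the function names are made up, it treats 64MB as 64 MiB, and it assumes GNU stat and util-linux blockdev are available (reading a block device's size usually requires root).

```shell
MIN_VDEV_BYTES=$((64 * 1024 * 1024))   # 64 MiB, the minimum mentioned above

# Print the size in bytes of a block device or a regular file.
vdev_size_bytes() {
    if [ -b "$1" ]; then
        blockdev --getsize64 "$1"
    else
        stat -c %s "$1"
    fi
}

# Succeed only if the given file or device is at least 64 MiB large.
vdev_large_enough() {
    [ "$(vdev_size_bytes "$1")" -ge "$MIN_VDEV_BYTES" ]
}

# Demonstration on a scratch file that is deliberately too small.
scratch=$(mktemp)
truncate -s 32M "$scratch"
vdev_large_enough "$scratch" || echo "$scratch is too small for a vdev"
rm -f "$scratch"
```

On a real setup you would call it as vdev_large_enough /dev/mapper/truecrypt1 once the volume is mounted.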

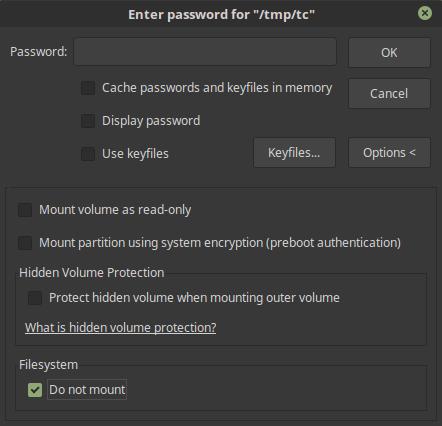

Once that's done, mount the volume but not as an FS:

You can now find it under /dev/mapper/truecryptX where X is the slot you chose in the previous step.

Now go over these steps one more time as we will need two volumes next.

Creating a ZFS pool

Assuming slot 1 and 2 are used, initialize a pool on the raw volumes:

zpool create -o ashift=9 secretpool mirror /dev/mapper/truecrypt1 /dev/mapper/truecrypt2

The -o ashift=9 parameter explicitly sets this pool's block (sector) size. The value is an exponent of two: 9 means 2^9 = 512 bytes, and if you want 4K (4096 bytes), use 12. It can only be set at creation time. The mirror keyword tells ZFS that data should be mirrored across the specified vdevs. In our case, it is a mirror of two vdevs.
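Since ashift is just the base-2 logarithm of the block size, the right value can be worked out from a sector size in bytes. A quick sketch, with function names made up for this post:

```shell
# Block size in bytes for a given ashift value (2^ashift).
ashift_to_bytes() {
    echo $((1 << $1))
}

# Inverse: ashift for a power-of-two block size in bytes.
bytes_to_ashift() {
    size=$1
    shift_val=0
    while [ "$size" -gt 1 ]; do
        size=$((size / 2))
        shift_val=$((shift_val + 1))
    done
    echo "$shift_val"
}

for a in 9 12; do
    echo "ashift=$a means $(ashift_to_bytes "$a") bytes"
done
```

For instance, bytes_to_ashift 4096 gives the value to pass for 4K sectors.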

We can now see our pool up:

$ zpool list

NAME SIZE ALLOC FREE EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

secretpool 112M 62K 112M - 0% 0% 1.00x ONLINE -

The status command shows in detail what the pool looks like (sorry for the broken indents):

$ zpool status

pool: secretpool

state: ONLINE

scan: none requested

config:

NAME STATE READ WRITE CKSUM

secretpool ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

truecrypt1 ONLINE 0 0 0

truecrypt2 ONLINE 0 0 0

errors: No known data errors

Setting pool parameters

You do not want compression with encryption. If you're on a budget for space, you probably shouldn't be looking into the Zettabyte File System.

zfs set compression=off secretpool

Explicitly use SHA-256 for checksums:

zfs set checksum=sha256 secretpool

Since we're on Linux, use POSIX ACLs and enable xattr:

zfs set acltype=posixacl secretpool

zfs set xattr=sa secretpool

Finally, let ACLs pass through:

zfs set aclinherit=passthrough secretpool

Optionally, change the mount point:

zfs set mountpoint=/some/fancy/mountpoint secretpool
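If you set up pools regularly, the settings above can be kept in one list. The sketch below only prints the commands so they can be reviewed first; the function name is made up, and the final zfs get line is there to read the values back once they have been applied.

```shell
POOL="secretpool"
# The properties set in this section, in the same order.
PROPS="compression=off checksum=sha256 acltype=posixacl xattr=sa aclinherit=passthrough"

pool_setup_commands() {
    for prop in $PROPS; do
        echo "zfs set $prop $POOL"
    done
    # Read the values back to confirm they took effect.
    echo "zfs get compression,checksum,acltype,xattr,aclinherit $POOL"
}

pool_setup_commands
```

Datasets created later under the pool inherit these properties unless you override them on the dataset itself.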

Creating a dataset

As said above, we need datasets to fully embrace ZFS.

zfs create secretpool/data

Normally, only root can use it. To change this:

sudo chown -R user /secretpool

You now have a fully working zpool running on a mirror of encrypted TrueCrypt volumes. Let's put it to the test.

Gracefully closing everything

Unmounting the TrueCrypt volumes involves some steps beforehand.

First, cease all read/write operations from/to the pool. Then:

zpool export secretpool

You can now individually unmount your TrueCrypt volumes safely.

To remount everything, first bring TrueCrypt up and then:

zpool import secretpool

What this actually does is seek any valid vdev that contains ZFS data. It then determines which of them belong to the specified pool before mounting it.
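The order of operations matters here, so it can help to wrap it in two small functions. This is a dry-run sketch under several assumptions: the container paths are placeholders, the run wrapper is a hypothetical helper, and the TrueCrypt options (--text, --filesystem=none, --slot, --dismount) are taken from the 7.1a command-line help and should be double-checked with truecrypt --help on your system.

```shell
# Hypothetical helper: print the command by default, execute it when DRY_RUN=0.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

POOL="secretpool"
VOL1="/path/to/volume1.tc"   # placeholder container paths
VOL2="/path/to/volume2.tc"

# Bring up the TrueCrypt volumes as raw devices first, then import the pool.
open_pool() {
    run truecrypt --text --filesystem=none --slot=1 "$VOL1" &&
    run truecrypt --text --filesystem=none --slot=2 "$VOL2" &&
    run zpool import "$POOL"
}

# Export the pool first, and only then dismount all TrueCrypt volumes.
close_pool() {
    run zpool export "$POOL" &&
    run truecrypt --text --dismount
}

open_pool
close_pool
```

If the import does not find the volumes by itself, zpool import -d /dev/mapper secretpool tells ZFS to look for vdevs in that directory.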

Simulating data corruption

We can simulate data corruption by taking a vdev offline and changing some of its binary data. Before that, bring the pool back up and copy some files so that about 70% of it is used.

zpool offline secretpool truecrypt2
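If you'd rather not use a hex editor, the corruption can also be scripted with dd. The sketch below is an illustration only: corrupt_block is a made-up name, and it is demonstrated on a scratch file rather than on a real volume. On the real thing, the target would be /dev/mapper/truecrypt2 (as root), and the sketch makes no attempt to pick an offset that actually holds file data.

```shell
# Overwrite one 4 KiB block of a file or device with random bytes.
# conv=notrunc keeps the rest of the target intact.
corrupt_block() {
    target=$1   # file or device to damage
    block=$2    # index of the 4 KiB block to overwrite
    dd if=/dev/urandom of="$target" bs=4096 count=1 seek="$block" conv=notrunc 2>/dev/null
}

# Demonstration on a 1 MiB scratch file filled with zeros.
scratch=$(mktemp)
truncate -s 1M "$scratch"
before=$(sha256sum "$scratch" | cut -d' ' -f1)
corrupt_block "$scratch" 100
after=$(sha256sum "$scratch" | cut -d' ' -f1)
[ "$before" != "$after" ] && echo "contents changed, size is still $(stat -c %s "$scratch") bytes"
rm -f "$scratch"
```

Overwriting several blocks at different offsets makes it more likely that the damage lands on data ZFS actually uses.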

Proceed with said data corruption using your hex editor of preference, then:

zpool online secretpool truecrypt2

You can now see that ZFS did some resilvering:

$ zpool status secretpool -v

pool: secretpool

state: ONLINE

scan: resilvered 10.5K in 0h0m with 0 errors on Tue Jan 10 00:49:24 2017

config:

NAME STATE READ WRITE CKSUM

secretpool ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

truecrypt1 ONLINE 0 0 0

truecrypt2 ONLINE 0 0 0

errors: No known data errors

ZFS pools are checked for errors (and also repaired if any) by "scrubbing" them:

zpool scrub secretpool

Note that ZFS does not need to export (take offline) a pool to scrub it. This process can be executed live, though at some performance cost if you use resources (i.e. data) in the pool while it runs.

A status command indeed shows that some data has been recovered from the other (healthy) volume:

pool: secretpool

state: ONLINE

status: One or more devices has experienced an unrecoverable error. An

attempt was made to correct the error. Applications are unaffected.

action: Determine if the device needs to be replaced, and clear the errors

using 'zpool clear' or replace the device with 'zpool replace'.

see: http://zfsonlinux.org/msg/ZFS-8000-9P

scan: repaired 384K in 0h0m with 0 errors on Tue Jan 10 00:56:59 2017

config:

NAME STATE READ WRITE CKSUM

secretpool ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

truecrypt1 ONLINE 0 0 0

truecrypt2 ONLINE 0 0 3

errors: No known data errors
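The CKSUM column in the output above can also be checked from a script, for example after a scheduled scrub. Here is a rough sketch: cksum_errors is a made-up name, and the filter assumes plain integer counters as in the output shown here (very large counts may be abbreviated by zpool and would be missed).

```shell
# Print "device count" for every config line with a non-zero CKSUM counter.
# Expects the output of zpool status on standard input.
cksum_errors() {
    awk '$1 != "NAME" && NF == 5 && $5 ~ /^[0-9]+$/ && $5 > 0 { print $1, $5 }'
}

# Sample input: the config table from the status output above.
cksum_errors <<'EOF'
NAME STATE READ WRITE CKSUM
secretpool ONLINE 0 0 0
mirror-0 ONLINE 0 0 0
truecrypt1 ONLINE 0 0 0
truecrypt2 ONLINE 0 0 3
EOF
```

On a live system, the same filter would be used as zpool status secretpool | cksum_errors.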

Since everything is back to normal, we can clear the issue and check the status again:

zpool clear secretpool

pool: secretpool

state: ONLINE

scan: repaired 384K in 0h0m with 0 errors on Tue Jan 10 00:56:59 2017

config:

NAME STATE READ WRITE CKSUM

secretpool ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

truecrypt1 ONLINE 0 0 0

truecrypt2 ONLINE 0 0 0

errors: No known data errors

Notice how the CKSUM column changed for /dev/mapper/truecrypt2. Indeed, we told ZFS to clear all problems and set everything back to zero. The scan line shows the result of the latest scan, meaning it will not change even after issuing a zpool clear.

Additional resources

Now that you know how to set up a mirror of TrueCrypt volumes, you might want to deal with more complex configurations. Indeed, the power of ZFS comes from its more advanced features. Namely, you can make use of caching and pool architectures to improve read/write performance and use snaps, quotas as well as some other FS tools to make the most efficient use of your datasets.

However, explaining how to do that is beyond the scope of this blog post. If you wish to learn more about ZFS, this blog series is a must-read. It goes through pretty much all the details you need to know. There's one piece of information you still need to get though.

Pool naming

First, you might have noted that pools are mounted at the root of your FS (unless you changed some ZFS parameters). As such, names that are already in use (most likely "home", "root", etc.) must be avoided. ZFS should normally warn you if you try to overwrite existing paths.

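Note that the -m mountpoint option only exists for zpool create (for zpool import, -m means something else: it allows importing a pool with a missing log device). At import time, you can instead specify an alternate root with -R, which places the pool's mount points under that directory (here /some/fancy/path/secretpool):

zpool import -R /some/fancy/path secretpool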

Similarly, the naming function is not injective (i.e. two distinct pools, each on its own vdevs, may share the same name). It goes without saying that you should try to name your pools uniquely. If you don't follow that advice for whatever reason, there are a couple of things to remember:

- you cannot create a pool whose name equals that of a pool that is already imported

- you cannot import a pool by its name if more than one pool shares that name

Let's see how ZFS reacts when triggering these situations. First, pool creation name collisions:

zpool create secretpool /dev/mapper/truecrypt3

cannot create 'secretpool': pool already exists

And mounting name collisions:

zpool import secretpool

cannot import 'secretpool': more than one matching pool

import by numeric ID instead

Creating pools with the same name is possible, but all pools with that name must be offline (exported).

For mounting, you must use the pool GUID. Using zdb, you can find it under pool_guid:

secretpool:

version: 5000

name: 'secretpool'

state: 0

txg: 7392

pool_guid: 16093900627180053822

Alternatively, you can use zpool import to show all pools that are mountable:

pool: secretpool

id: 2464308567901183139

state: ONLINE

action: The pool can be imported using its name or numeric identifier.

config:

secretpool ONLINE

truecrypt3 ONLINE

pool: secretpool

id: 9230289164394709987

state: ONLINE

action: The pool can be imported using its name or numeric identifier.

config:

secretpool ONLINE

mirror-0 ONLINE

truecrypt1 ONLINE

truecrypt2 ONLINE

Then:

zpool import 2464308567901183139

Once imported, commands such as zfs set and zpool offline will obviously only target the mounted "secretpool".
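When several pools share a name, the numeric IDs can be pulled out of the zpool import listing instead of being copied by hand. A small sketch, where pool_ids is a made-up name and the sample input is the listing shown above:

```shell
# Print the numeric ID of every importable pool with the given name.
# Expects the output of a bare "zpool import" on standard input.
pool_ids() {
    awk -v name="$1" '
        $1 == "pool:" { current = $2 }
        $1 == "id:" && current == name { print $2 }
    '
}

# Sample input: the listing shown above, trimmed to the relevant lines.
pool_ids secretpool <<'EOF'
pool: secretpool
id: 2464308567901183139
state: ONLINE
pool: secretpool
id: 9230289164394709987
state: ONLINE
EOF
```

On a live system this would be zpool import | pool_ids secretpool, after which you pick the ID whose config section lists the vdevs you expect.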

Final thoughts

This concludes this blog post on ZFS+TrueCrypt. This setup is actually pretty good, but there are some things to keep in mind.

Namely, TrueCrypt solely uses XTS as a block cipher mode of operation. XTS has been designed specifically for disk encryption, so if your vdevs are made out of TrueCrypt containers (and not whole disks/devices), then it makes no sense to use it as you will not benefit from its 512-byte block size. Your FS already manages how your files' bytes are written to your disk's sectors. Instead, you should use an authenticated mode of operation such as GCM.

Additionally, XTS isn't that great. It also doesn't authenticate ciphertexts, which makes them prone to tampering. Just one more reason to use AE(AD) modes.

More native solutions using dm-crypt/LUKS are indeed possible, but these also use XTS and therefore don't offer much of an advantage.

So in the end, both solutions are OK but it would be nicer to have proper encryption software that uses AES w/ GCM or even better, ChaCha20 w/ Poly1305.

The next blog post will be dedicated to crypto when used by Web services and how one can harden them. It will cover the subject in much detail so that you can fully understand how things (should) work.